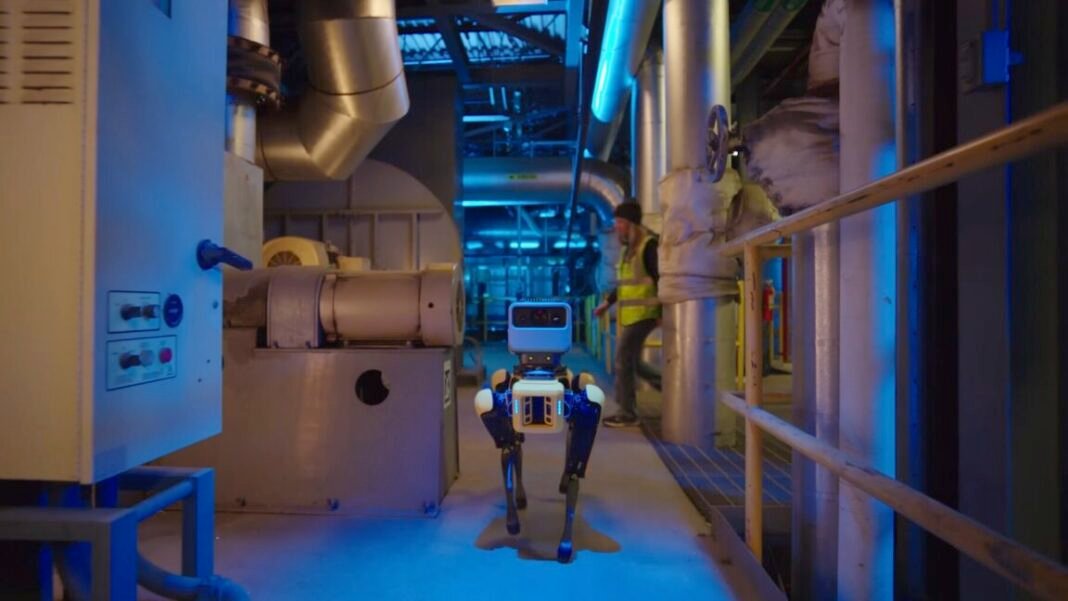

Robots such as Boston Dynamics’ four-legged Spot can now accurately read analog thermometers and pressure gauges while roaming around factories and warehouses. Those improvements come courtesy of Google DeepMind’s newest robotics AI model, which aims to improve robots’ “embodied reasoning” when they interact with physical environments.

The new Gemini Robotics-ER 1.6 model announced on April 14 performs as a “high-level reasoning model for a robot” that can plan and execute tasks, according to Google DeepMind. This model also unlocks the capability of accurately reading instruments such as complex gauges and doing visual inspections using sight glasses that provide a transparent window to peek inside tanks and pipes—a performance upgrade that came about through Google DeepMind’s ongoing collaboration with robotics company Boston Dynamics.

Boston Dynamics has a keen interest in testing both quadruped and humanoid robotic workers in a wide range of industrial facilities, including the automotive factories of the robotics company’s corporate owner, Hyundai Motor Group. The company’s robot “dog,” Spot, is being trialed as a robotic inspector that roams throughout industrial facilities to check up on everything. Such inspection duties require “complex visual reasoning” to interpret the multiple needles, liquid levels, container boundaries and tick marks, along with text, in various instruments.

The model driving it

To handle such tasks, the Gemini Robotics-ER 1.6 model provides robots with “agentic vision” that combines visual reasoning with the capability of executing code to create a “visual scratchpad” for inspecting and manipulating images. Such agentic vision was introduced in Google’s Gemini 3.0 Flash model back in January 2026.
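To give a feel for the kind of calculation a “visual scratchpad” might run once the key points on a dial have been located, here is a minimal Python sketch. It is not Google DeepMind’s method: the function names, the pixel coordinates, and the assumption of a linear scale between two labeled tick marks are all hypothetical, chosen only for illustration.

```python
import math

def needle_angle(center, point):
    """Angle in degrees, clockwise from straight up, in image coordinates (y grows downward)."""
    dx = point[0] - center[0]
    dy = point[1] - center[1]
    return math.degrees(math.atan2(dx, -dy)) % 360

def read_gauge(center, tip, min_tick, max_tick, min_value, max_value):
    """Linearly interpolate a reading from the needle position.

    Assumes (hypothetically) a clockwise, linear scale running from
    min_tick to max_tick, and a needle that lies within that sweep.
    """
    a_min = needle_angle(center, min_tick)
    sweep = (needle_angle(center, max_tick) - a_min) % 360
    offset = (needle_angle(center, tip) - a_min) % 360
    return min_value + (max_value - min_value) * offset / sweep

# Made-up example: a 0-10 dial whose scale runs from lower left to lower right.
reading = read_gauge(
    center=(100, 100),
    tip=(100, 50),        # needle pointing straight up
    min_tick=(0, 200),    # "0" mark, lower left
    max_tick=(200, 200),  # "10" mark, lower right
    min_value=0.0,
    max_value=10.0,
)
print(round(reading, 2))
```

A real gauge would add complications this sketch ignores, such as nonlinear scales, multiple needles, and camera perspective.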

The agentic vision capability reportedly boosts robotic performance on instrument reading tasks from 23 percent in the older Gemini Robotics-ER 1.5 model to 98 percent in the new Gemini Robotics-ER 1.6 model. For comparison, Gemini 3.0 Flash delivered just 67 percent accuracy.

The baseline Gemini Robotics-ER 1.6 model can still achieve 86 percent accuracy in reading instruments even without agentic vision. That is because the model points to different elements in an image as intermediate steps when working through complex tasks, such as counting items or identifying the most salient features. It also supposedly delivers an improved “multi-view reasoning” capability that allows a robotic system to use multiple camera streams to better understand its environment.
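Counting by pointing can be pictured as a simple tally: if a model returns one labeled point per object it finds, the count for each requested item is the number of points carrying that label. The Python sketch below assumes a hypothetical output format (a list of dictionaries with `label` and `point` keys) and uses made-up data.

```python
from collections import Counter

def count_by_label(points, requested):
    """Tally detected points per requested label; items never pointed to count as zero."""
    counts = Counter(p["label"] for p in points)
    return {label: counts.get(label, 0) for label in requested}

# Hypothetical model output: one entry per detected object.
detections = [
    {"label": "hammer", "point": [412, 130]},
    {"label": "hammer", "point": [455, 610]},
    {"label": "scissors", "point": [220, 340]},
    {"label": "pliers", "point": [700, 515]},
]

result = count_by_label(detections, ["hammer", "scissors", "pliers", "wheelbarrow"])
print(result)
```

Because the tally is built only from points the model actually produced, a requested item with no corresponding point comes back as zero instead of being assumed present.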

One performance example given by Google DeepMind highlights how Gemini Robotics-ER 1.6 could correctly identify the number of hammers, scissors, paintbrushes, pliers, and various gardening tools in a cluttered image. By comparison, the older Gemini Robotics-ER 1.5 model failed to accurately count hammers or paintbrushes, completely overlooked the scissors, and falsely identified a nonexistent wheelbarrow because that was one of the requested items in the identification task. That implies the newer model has less of a “hallucination” problem than the older one, even if the latest model is still far from achieving human-level comprehension of its surroundings.

Google also describes Gemini Robotics-ER 1.6 as its “safest robotics model yet,” with a “substantially improved capacity to adhere to physical safety constraints.” It enables robots both to follow safety instructions and to make safer decisions when handling liquids or materials. The new model can also more accurately perceive the risk of injury to humans in different scenarios, such as a young child sticking something into an electrical socket.

Future application

The practical test of this model’s value will come as robotics companies and researchers get more hands-on time to test its capabilities. So far, robots have proven most efficient and productive when performing as highly specialized machines doing the same specific tasks over and over again in factory assembly lines or performing highly coordinated and choreographed movements in warehouse aisles. Companies like Google are betting that the latest AI models can help robots become more free-range workers operating in complex and less controlled real-world environments—a prospect that also carries the greater risk of robots doing damage or harm to humans if something goes wrong.

At the very least, the latest model may nudge us one step closer to a future where a General Atomics International Mark 4 robot can scan the room and correctly exclaim, “There’s no fudge here!”