If you’ve been using the Internet for any length of time, you’ve probably used a tool like Google Translate to convert webpages or snippets of text to and from languages ranging from Uzbek to Esperanto. But what if you want to translate into more esoteric “languages” like “LinkedIn Speak,” “Gen Z slang,” or “horny Margaret Thatcher”?

This week, many people across the Internet have been amused to find that the AI-powered Kagi Translate can perform these and countless other unlikely “translation” tasks. And while the collective discovery highlights the playful, creative side of large language models, it also exposes the risks of giving users free-form access to general-purpose LLM tools.

What is a “language,” really?

While you might know Kagi best as the paid competitor to Google’s ever-worsening search product, the company launched its Kagi Translate tool back in 2024, saying at the time that it was a “simply better” competitor to tools like Google Translate and DeepL. At launch, the company said Kagi Translate “uses a combination of LLMs, selecting and optimizing the best output for each task,” a fact that “can occasionally lead to quirks that we’re actively working to resolve.”

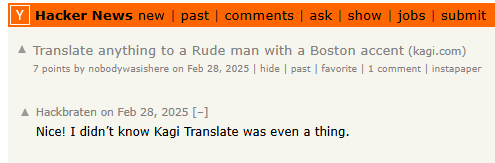

The first versions of the tool featured simple dropdown menus to choose from 244 different languages for the source and target of the translation. In February 2025, though, at least one unheralded Hacker News poster noticed that you could play with the URL parameters to set the target language to “rude man with a Boston accent” without breaking anything.

In recent weeks, Kagi’s own social media account has highlighted the service’s ability to imitate “Reddit Speak” or generate McKinsey consultant speak with a few clicks on Kagi Translate. Early Tuesday morning, though, these unorthodox use cases broke containment after a Hacker News user delighted in reporting that “Kagi Translate now supports LinkedIn Speak as an output language.” Further down in that popular HN thread, other users noticed that you can alter the output language just by typing into the search bar of Kagi Translate’s web interface, and the tool’s underlying AI would do its best to accommodate you.

From there, it was off to the races, with forum-goers and social media users rushing to test their wildest “translation language” ideas. Some tried to make a point by using the tool for media criticism, for obvious political jokes, for making fun of science deniers, or for calling out billionaires (editing the “input language” has some interesting effects in that last example). Others sought to emulate the voices of famous figures with distinct speaking styles, from Carl Sagan and Slavoj Žižek to Werner Herzog and Morgan Freeman.

Still others went even sillier, asking for translations into “tiny little kitten” or targeting programming languages (watch out, Claude Code). Of course, Kagi’s social media team leaned into the fun, encouraging people to use the “LinkedIn Speak” translations to “fit right into that crowd.”

Remember when LLMs were fun?

In a way, this all feels like a return to the early days of ChatGPT, when people still marveled at the seemingly miraculous capabilities of these new transformer-based text engines. Back then, you could get some Internet content mileage out of simply asking an LLM to write a decent imitation of Vogon poetry or mimic the style of a PowerPC vs. Intel forum debate circa 2000. It was a fun party trick that everyone wanted to share with their Internet friends.

> that kagi translate thing is great
>
> austin (@aparker.io), March 17, 2026 at 8:19 PM

In the intervening years, of course, an unignorable AI hype train has transformed these LLMs from fun toys into the linchpin of the entire tech industry—one that industry titans keep saying will take most of our jobs or create a civilization-threatening superintelligence any day now. By putting that kind of LLM into a silly translation container, Kagi Translate brings back LLMs’ whimsical ability to synthesize language patterns in some truly creative ways.

And unlike Google’s hallucinated Overviews or AI therapy bots giving bad advice, the risk of harm here seems minimal. No one is going to mistake Kagi Translate for an all-knowing oracle or a replacement for your entire software engineering department. It’s just a fun toy that lets the Internet at large play with language in a way that would have been practically unthinkable just five years ago.

Even this kind of toy-like implementation of an LLM could benefit from some guardrails, though. Asking Kagi Translate to emulate “someone who keeps saying slurs” can result in outputs that a company like Kagi might not want to be associated with. This is what happens when you don’t sanitize your LLM inputs, people!
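For the curious, here is a minimal sketch of one blunt guardrail: checking a user-supplied target "language" against an allowlist before it ever reaches a model. Nothing here reflects how Kagi Translate actually works; the names `ALLOWED_TARGETS`, `MAX_TARGET_LENGTH`, `normalize_target`, and `validate_target` are all hypothetical, and the allowlist entries are just examples drawn from this article.

```python
import unicodedata

# Hypothetical allowlist: a few real languages plus vetted novelty styles.
# A real service would load its full supported list from configuration.
ALLOWED_TARGETS = {
    "english",
    "uzbek",
    "esperanto",
    "linkedin speak",
    "gen z slang",
}

MAX_TARGET_LENGTH = 40  # arbitrary cap chosen for this sketch


def normalize_target(raw: str) -> str:
    """Normalize Unicode form, collapse whitespace, and case-fold the label."""
    text = unicodedata.normalize("NFKC", raw)
    return " ".join(text.split()).casefold()


def validate_target(raw: str) -> str:
    """Return the normalized label, or raise ValueError if it is not allowed."""
    target = normalize_target(raw)
    if not target or len(target) > MAX_TARGET_LENGTH:
        raise ValueError("target language is empty or too long")
    if target not in ALLOWED_TARGETS:
        raise ValueError(f"unsupported target language: {target!r}")
    return target
```

With this in place, `validate_target("  LinkedIn   Speak ")` returns `"linkedin speak"`, while a request for “someone who keeps saying slurs” raises `ValueError` before any model sees it. The obvious trade-off is that a strict allowlist also shuts down the free-form experimentation that made the tool go viral, so a service might instead pair open-ended input with moderation of the output.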