These days, it seems like every tech company and their corporate parent is looking to squeeze AI tools and features into their products, whether they’re wanted or not. So when files with names and functions referencing a “SteamGPT” appeared in a recent Steam client update, Valve watchers took quick notice.

From the outside, it’s hard to tell precisely what form any such “SteamGPT” would take. But the variable names and references in the files themselves suggest that Valve may be looking to use AI tools to streamline internal evaluations of in-game incidents and sift through potentially suspicious accounts.

Looking at the variables

As tracked by the automated SteamTracking GitHub project, the term “SteamGPT” appears multiple times in three separate files added in the April 7 Steam client update. In addition to the SteamGPT naming convention—a seemingly obvious reference to the generative pre-trained transformers popularized by ChatGPT and its ilk—the files include mentions of terms like multi-category inference, fine-tuning, and “upstream models” that point to some sort of generative AI system.

What might that AI be used for? Well, the files contain multiple references to a labeler and “labeling tasks,” working with arguments identifying a “problem” and “subproblem” and looking at an “evaluation_evidence_log” related to a specific “matchid.” Together with mentions of a “logs_to_inference” metamodel, that sounds like it could be a hook into a system for automatically generating labels to categorize the various incident reports made in Steam multiplayer games.

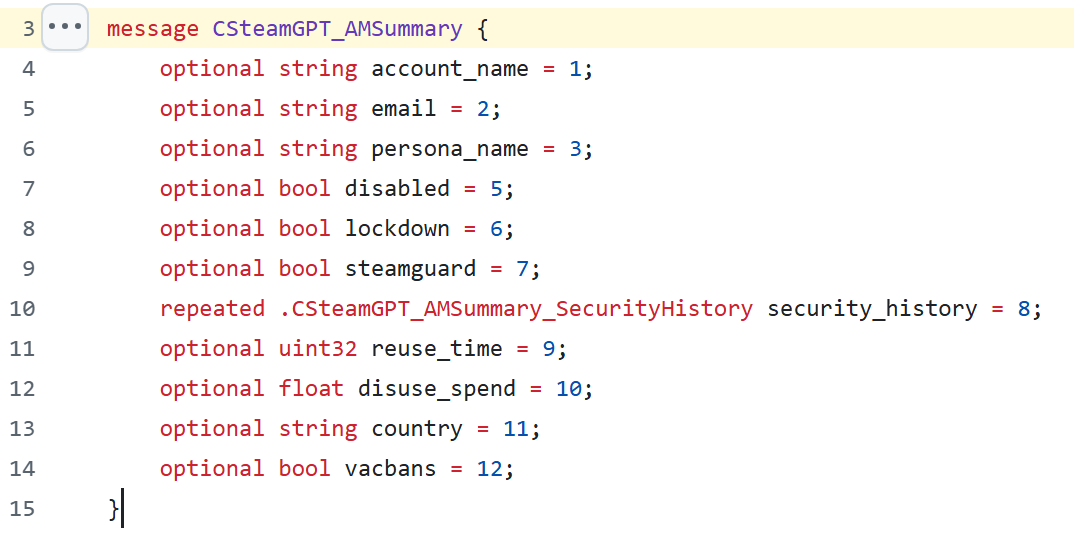

Another portion of the SteamGPT files hints that Valve might use AI tools to summarize suspicious activity history or patterns in potentially fraudulent Steam accounts. A number of “SteamGPTSummary” functions include references to VAC bans, Steam Guard, and account lockdowns. These functions also seem to look at evidence such as email addresses (“high_fraud_email”), use of advanced security features (“two_factor”), and where a linked phone number originates (“phone_country”) to help determine whether an account is on the up-and-up. There are also a few references here to an account’s trust score, which is already used to help secure matchmaking in games like Counter-Strike 2.

AI is the future, you know

While these naming conventions are suggestive, they don’t necessarily indicate how “SteamGPT” would work or whether the prototype functions are even used in the current version of Steam (or will be in future versions). Still, taken together, this looks less like a player-facing AI model (à la Microsoft’s Gaming Copilot) and more like an AI tool to help Steam moderators sift through mountains of incident logs and potentially suspect accounts.

It wouldn’t be a shock if Valve embraced AI tools in this way. In a short video posted last year, Valve founder and CEO Gabe Newell compared the growth of AI to the rise of spreadsheets and the Internet, saying that “it’s incredibly obvious that machine learning systems, AI systems, are going to profoundly impact pretty much every single business.” In another video, he suggested that new programmers who learn how to use AI tools as a scaffold “will become more effective developers of value than people who’ve been programming for a decade.”

In 2024, Valve started explicitly allowing the use of AI tools in the development of games published on Steam, provided that use is disclosed to players. By mid-2025, that disclosure had appeared on nearly 8,000 Steam titles, according to a study by Totally Human Media, including 20 percent of the games released to that point that year.